Update: On May 31, 2019, after a wait of 3 1/2 years, the US Dept of Education finally released its findings, showing that Eva Moskowitz had indeed violated FERPA by posting online the details from the files of Fatima Geidi’s son and sending them to reporters, and then again when she included them in her book. Yet the Department didn’t penalize her or the school or even require that she omit these details from her book, merely that some trainings in FERPA be scheduled. The Daily News covered this story and reported that Eva Moskowitz plans to appeal the decision. The story was also reported in Education Week and Politico. More here.

This is cross-posted at the NYC Public School Parents blog.

On May 4, 2019, the NY Daily News ran an article about the plight of Lisa Vasquez and her autistic daughter Jazmiah, who was pushed out of a Success Academy charter school; Success also repeatedly threatened to call the city’s Administration for Children’s Services on Ms. Vasquez. Her daughter has now been out of school for 18 months. The NYC Department of Education has failed to place her in any setting that provides the services she needs, and refuses to pay for the private school that an impartial hearing officer has agreed would be appropriate.

The same day, the media outlet Chalkbeat ran a longer story about this family’s predicament. While answering questions from Chalkbeat reporter Alex Zimmerman, Success school officials showed him detailed confidential records from the student’s files, “including progress reports, contemporaneous notes from multiple educators and psychologists, and a copy of her learning plan.”

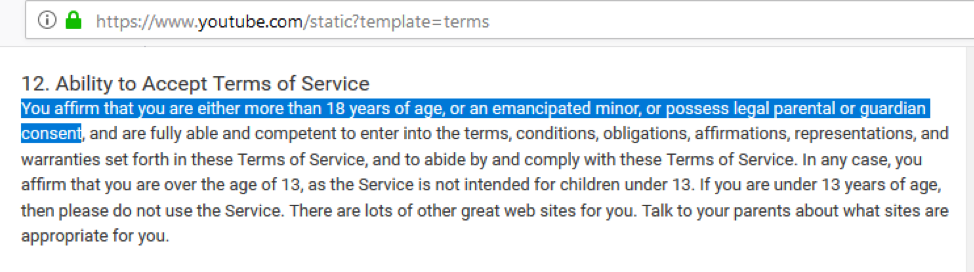

This is a clear violation of the Family Educational Rights and Privacy Act, also known as FERPA. Though Success Academy officials claim they had the right to “rebut false claims without violating FERPA when a parent has chosen to go the press,” there is no such provision in FERPA.

I contacted Ms. Vasquez through her attorney and offered to help her file a FERPA complaint. She accepted, and on May 9 she sent it to the US Department of Education. The complaint is below. We also filed a complaint with the NY State Education Department Chief Privacy Office, as this disclosure also violates NY State Education Law Section 2-d, the student privacy law passed in 2014 as a result of the controversy over inBloom.

What’s especially infuriating about these events is that Success Academy and its CEO, Eva Moskowitz, have been using these same illegal tactics for years to retaliate against families who dare criticize the way her schools treat students.

In October 2015, after Fatima Geidi was interviewed on a PBS News Hour show by John Merrow about how her son was mistreated by the principals and teachers at Success Academy, Eva Moskowitz sent a letter with details of his records, full of trumped-up offenses, to every education reporter in the nation, and posted it on the Success website.

Fatima filed a FERPA complaint on Oct. 30, 2015, more than three years ago, a complaint that she is still waiting for the US Department of Education to respond to, though the Director of the Student Privacy Policy Office Michael Hawes told me the investigation into her complaint was essentially complete months ago. What is worse is that because of the long delay in responding, Moskowitz subsequently wrote a book in 2017 published by Harper Collins, containing many of the same false allegations against Fatima’s son, a book that is still sitting on the shelves and in libraries throughout the nation.

Moreover in at least five Success Academy charter schools, SAC Cobble Hill, SAC Crown Heights, SAC Fort Greene, SAC Harlem 2, and SAC Harlem 5, FERPA violations were noted by the SUNY Charter Institute during 2016 site visits, as noted in their Renewal reports. In each of these Renewal Reports, the same observation is made:

“The Institute and school worked cooperatively to correct minor infractions at the site visit regarding Family Educational Rights and Privacy Act (“FERPA”) wherein the intent of the school was laudable but technically a violation…”

I wouldn’t necessarily assume that the intent of these school officials was laudable, especially given the SUNY Institute’s tendency to rubberstamp renewals and ignore the many federal and state lawsuits against Success Academy, but I do find it interesting that they felt compelled to note these violations in their reports in any case.

Clearly Eva Moskowitz and Success Academy officials remain intent on ignoring federal law and violating the privacy of students – and continue to get away with it because of inaction from the federal government.

Last October, the Inspector General’s office released a scathing audit of the US Department of Education’s record in responding to FERPA complaints. The IG office reported that there were 344 open investigations as of May 2018, with many more pending complaints, including some two years old, for which no decision had yet been made as to whether to investigate or not. They also found that no systematic process existed for even tracking and calculating how many complaints went unresolved over time. They wrote that “The Privacy Office is not meeting its statutory obligation to appropriately enforce FERPA and resolve FERPA complaints,” and they required a corrective action plan. This audit was reported on in Ed Week and other publications.

The US Dept of Education wrote a response to the audit, detailing how they would reform their process. Michael Hawes was appointed the new Director of Student Privacy Policy to clean up the mess.

On Friday, Michael Hawes left the Department of Education to join the Census Bureau, but before he left he told me that the active investigations into Fatima’s two complaints had been completed for some time, and a “findings letter” written, but that the letter could not be released because it had not yet been approved by senior leadership at the Department of Education. A timeline of these events is below.

October 12, 2015: PBS News Hour runs a segment with an interview of Fatima Geidi and her son.

October 19, 2015: Ann Powell, VP of Public Affairs and Communications at Success Academy Charter Schools, sends out a media release to reporters, which includes a long letter from Eva Moskowitz to Judy Woodruff of PBS that includes personally identifiable information from the child’s education records. The letter is also posted the same day on the Success Academy website. The letter by Ms. Moskowitz includes an email from John Merrow of PBS, in which he writes that Fatima “was unwilling to release [my] son’s records.” Eva Moskowitz herself admits in her letter that Fatima was “refusing to waive her son’s privacy rights.”

October 22, 2015: Fatima sends a cease and desist letter to Eva Moskowitz, demanding that she remove the letter to PBS from the Success website containing false disciplinary charges against her son, as well as a second follow-up letter she had sent concerning her son on October 21.

October 23, 2015: Eva Moskowitz responds with a letter to Fatima, saying she had a “constitutional right to speak publicly to set the record straight about the reasons that your son received suspensions.”

October 29, 2015: NY Times reports on the infamous “Got to go” list composed by a principal at a Success charter school, specifying the children he would try to push out of the school.

October 30, 2015: Fatima files her initial FERPA complaint, which is covered in several publications, including Slate.

November 19, 2015: Along with Zakiyah Ansari of the Alliance for Quality Education, Fatima meets with Ebone Woods and David Krieger from the Office of Civil Rights of the US Dept. of Education in NYC to deliver a petition with thousands of signatures about Success Academy’s excessive suspensions and disparate treatment of black and Latino students, which contributes to the school-to-prison pipeline. They urge the federal government to stop funding the charter chain, which has received $37 million in federal grants since 2010, including $13.4 million this past year. Fatima also submits a formal civil rights complaint about her son’s treatment by the school.

December 1, 2015: Fatima receives a letter from OCR confirming that Success Academy is under investigation. At about that time or shortly thereafter, Eva Moskowitz removes the details of Fatima’s child’s records from the Success website.

January 22, 2016: Many more parents file a federal complaint with the US Department of Education Civil Rights office, accusing the Success network of charter schools of discriminating against students with disabilities. Officials in that office tell them Success is already under investigation. This new complaint is reported in the NY Times and elsewhere.

September 2016: SUNY Charter Institute notes unspecified violations of FERPA at several Success charter schools.

October 20, 2016: A full year has gone by without any response from the US Department of Education to Fatima’s complaint.

November 16, 2016: President-elect Donald Trump interviews Eva Moskowitz for a job as Secretary of the US Department of Education. The next day, she says she would decline the position if offered it but that she supports Trump’s “strong support for school choice.”

November 18, 2016: Ivanka Trump visits a Success Academy charter school.

September 12, 2017: Eva Moskowitz publishes the same chronicle of trumped-up allegations against Fatima’s son in a book published by Harper Collins.

September 28, 2017: The US Department of Education awards $6,130,200 to Success Academy charter schools to further expand their schools.

December 7, 2017: More than two years later, Fatima receives a letter from the US Department of Education, saying they are now ready to investigate her FERPA complaint from Oct. 30, 2015.

December 14, 2017: Success illegally releases information to a reporter from the records of another child, a first grader, after his mother files a lawsuit over his being suspended for forty-five days without a hearing.

December 20, 2017: Fatima files another FERPA complaint with the US Department of Education, having just discovered that many more details about her child’s records, some of them falsified, are contained in the new Moskowitz book. In her new complaint, she references her earlier complaint, and writes that because of the inordinate delay of more than two years, the harm to her child’s privacy has been seriously aggravated.

February 16, 2018: Fatima receives a letter from Frank Miller of the US Department of Education saying they had now received information from Success regarding her first complaint, filed more than two years ago, and in a few weeks would let her know the results. He doesn’t mention the second complaint, though Fatima responds with the information about the Moskowitz book that has since been published. She hears nothing back.

October 20, 2018: Three years have elapsed since the date of Fatima’s original FERPA complaint, without any action taken by the US Department of Education.

November 26, 2018: The Inspector General’s audit is released, showing the Department is years behind in responding to FERPA complaints, and demanding a corrective action plan.

December 13, 2018: I have a conversation over the phone with Michael Hawes, who by then has been appointed Acting Director, Family Policy Compliance Office, and is about to be named Director, Student Privacy Policy Office.

Hawes says they will soon release a “findings letter” about Fatima’s FERPA complaints. He points out that his office had already posted a 2015 “technical assistance” letter to the Virginia Attorney General, saying that a school’s desire to defend itself against accusations by parents or students is NOT a legal justification to disclose confidential information from their records without their consent. As that letter points out, “the Department has declined on previous occasions to extend the doctrine of implied waiver of the right to consent when parents or students have shared information with the media or other members of the general public due to the harm that this would cause to students’ privacy interests.”

December 20, 2018: The US Dept of Education responds to the IG audit, promising to take various steps to speed up its responses to complaints.

January 2019: Michael Hawes is appointed Director of the Student Privacy Policy Office.

January 9, 2019: Rachael Stickland, co-chair of the Parent Coalition for Student Privacy, and I have a conversation with Michael Hawes about the many positive changes he plans for the office, including speeding up their response to FERPA complaints. I suggest that they post more of the results of their investigations and findings letters online, so that the public can see they’ve made progress and can better understand what sorts of actions violate FERPA; this might also help prevent future infractions of the law. I again bring up Fatima’s complaints, which are still waiting for resolution more than three years after they were initially sent to his office. He assures me that the results of their investigation into both of her complaints will be released within a few weeks or months.

April 16, 2019: The US Department of Education awards $9,842,050 to Success charter schools. According to Success, this will help fund the opening of four new elementary schools, one new middle school, and one new high school, and help them expand four existing middle schools. By this point, there are at least four different pending federal lawsuits against the Success chain for violating the rights of students with disabilities.

April 22, 2019: Another lawsuit is filed vs Success Academy, for forcing a special needs student out of its schools, as well as calling Children’s Services on the mother, and forcibly removing the student to a Brooklyn police station. In this case, Ann Powell, Success Academy spokeswoman, writes in an email, “the lawsuit is completely without merit and contains numerous factual inaccuracies,” but says she cannot go into detail due to federal privacy laws.

May 2, 2019: Michael Hawes announces he is leaving the US Dept of Education to join the Census Bureau. He writes me, “Re the Geidi case, it’s cleared my office, but is being held for review by my leadership. I’m hoping I’ll be able to issue it before I depart.”

May 4, 2019: In response to the allegations made by Lisa Vasquez, Success Academy releases details of her child’s file to reporters, and claims that they have the right to do so in order to “rebut false claims without violating FERPA.”

May 9, 2019: Lisa Vasquez files her FERPA complaint against Success Academy. (see below).

May 10, 2019: Michael Hawes’ last day at the US Dept. of Education. Needless to say, the results of the investigation into Fatima’s complaint about Success Academy’s violations of her son’s privacy have still not been released.

May 9, 2019

U.S. Department of Education

Family Policy Compliance Office

400 Maryland Ave, SW

Washington, DC 20202-8520

By postal mail and email to: [email protected]

My name is Lisa Vasquez and I reside at the following address: [redacted]. I am the mother of Jazmiah Vasquez, my daughter who has been diagnosed as autistic and is seven years old. Jazmiah was a student at Success Academy Prospect Heights, 760 Prospect Pl, Brooklyn, NY 11216 from September 2017 to November 2017.

The principal of the school at that time was Sydney Solomon. The principal now is Darielle Petrucci. The CEO (or Superintendent) of the Success Academy Network is Eva Moskowitz, whose office is located at the following address: 95 Pine Street, Floor 6, New York, NY 10005.

On May 4, 2019, the NY Daily News ran an article about the fact that my daughter was pushed out of this charter school and still has not received a placement in a school that can provide her with the intensive services that she needs. https://www.nydailynews.com/new-york/education/ny-18-month-wait-school-disabilities-20190505-kfmsidunyjhzfmmkfvve2ylsv4-story.html The same day, the media outlet Chalkbeat also ran a longer story about her predicament. https://www.chalkbeat.org/posts/ny/2019/05/04/how-special-education-failed-jazmiah/

As the Chalkbeat reporter Alex Zimmerman wrote, Success officials showed him confidential records from my daughter’s file: “Success officials provided detailed records of Jazmiah’s time at the charter network, including progress reports, contemporaneous notes from multiple educators and psychologists, and a copy of her learning plan.”

On Twitter, the reporter exclaimed at the level of detail he was provided: “The way Success responded to my questions shocked me. They turned over detailed records of Jazmiah’s time at the school, including progress reports, contemporaneous notes from multiple educators and psychologists, and a copy of her learning plan.” https://twitter.com/AGZimmerman/status/1125405362709049344

At no time did I provide my consent for the school to release any of this information – and yet Success Academy officials claim that it was their right to do so. Here is an excerpt from the Chalkbeat article:

Ann Powell, Executive Vice President of Public Affairs & Communications at Success Academy Charter Schools, defended this disclosure. “It is our position that we are allowed to rebut false claims without violating FERPA when a parent has chosen to go the press but our critics don’t accept that position,”

As also noted in the article, Success Academy is a serial violator of students’ privacy rights; see the FERPA complaint filed by Fatima Geidi, submitted on Oct. 30, 2015, more than three years ago, about how Success Academy CEO Eva Moskowitz shared details of her son’s disciplinary records with reporters: https://nycpublicschoolparents.blogspot.com/2015/10/ferpa-complaint-from-fatima-geidi-to.html Here is an article about this: https://slate.com/human-interest/2015/10/success-academies-eva-moskowitz-published-a-students-disciplinary-record.html In that article, Ms. Moskowitz was quoted as follows:

“The First Amendment limits a person’s ability to use privacy rights to prevent others from speaking. When somebody chooses to make statements to the press, they waive their privacy rights on the topics they have discussed, particularly when, as here, those statements are inaccurate.”

Yet there is no such waiver or provision in FERPA. Ms. Geidi’s complaint still has received no response from your office though it was submitted three and a half years ago, even though she has heard that an investigation was launched and completed. Because of this undue delay, Success Academy officials apparently assume that they do not have to follow the law.

This disclosure by Success Academy of my daughter’s education records is an egregious and willful violation of both FERPA and IDEA. I urge you to take action in an expedited fashion to alert school officials to these repeated violations of the law and to exact punitive damages.

I certify that this information is accurate and true to the best of my knowledge.

Signed Lisa Vasquez, May 9, 2019